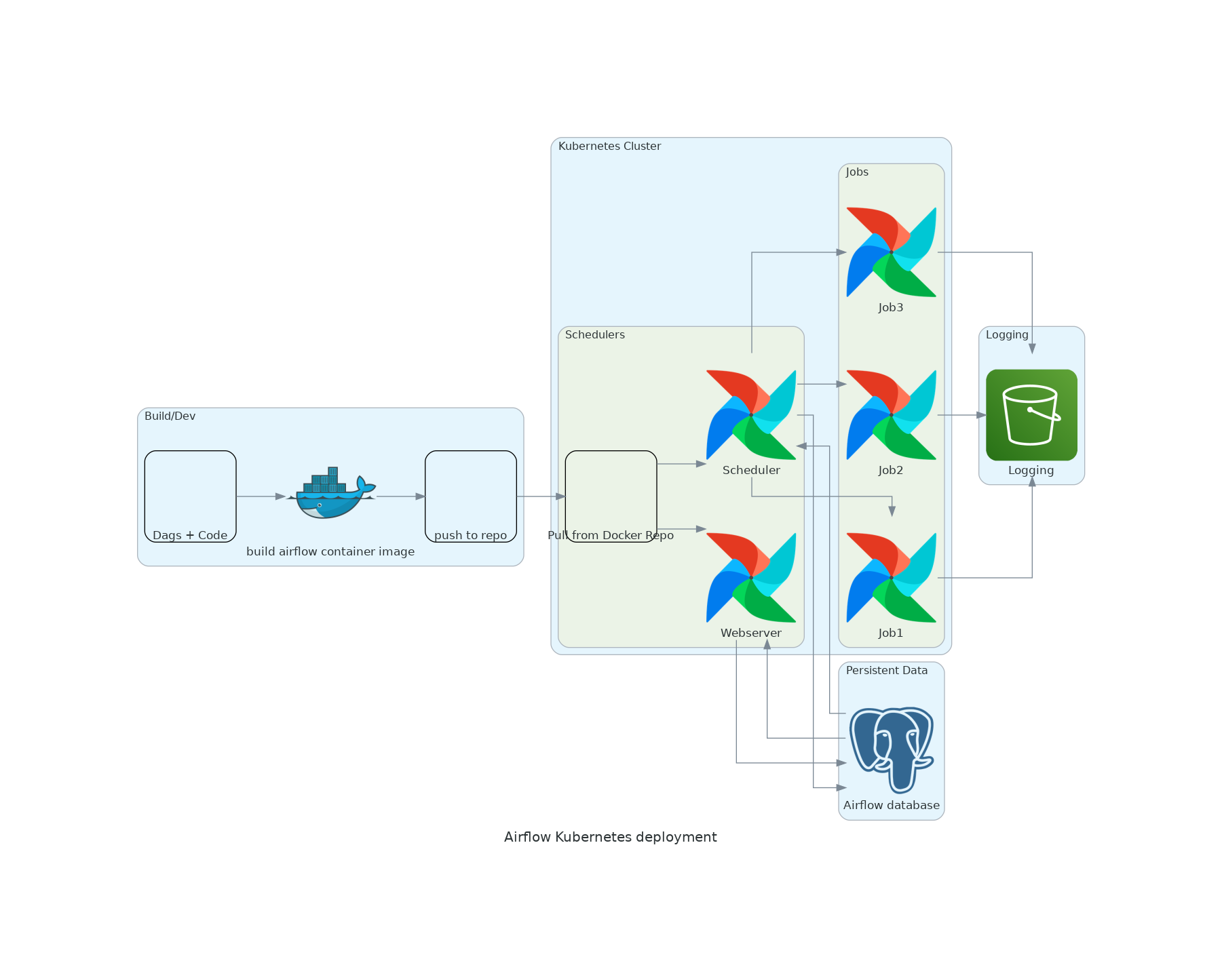

Run `microk8s.kubectl get pods -n $namespace` or `microk8s.kubectl logs -n $namespace` to examine the logs for the pod that just ran. By default, microk8s runs pods in the `default` namespace.

The KubernetesPodOperator runs any Docker image provided to it. Frequent use cases include:

- Running a task in a language other than Python. This guide includes an example of how to run a Haskell script with the KubernetesPodOperator.
- Having full control over how many compute resources and how much memory a single task can use.
- Executing tasks in a separate environment with individual packages and dependencies.
- Running tasks that use a version of Python not supported by your Airflow environment.
- Running tasks with specific Node (a virtual or physical machine in Kubernetes) constraints, such as only running on Nodes located in the European Union.

A comparison of the KubernetesPodOperator and the Kubernetes executor

Executors determine how your Airflow tasks are executed. The Kubernetes executor and the KubernetesPodOperator both dynamically launch and terminate Pods to run Airflow tasks. As the name suggests, the Kubernetes executor affects how all tasks in an Airflow instance are executed. The KubernetesPodOperator launches only its own task in a Kubernetes Pod with its own configuration. It does not affect any other tasks in the Airflow instance. To configure the Kubernetes executor, see Kubernetes Executor.

The following are the primary differences between the KubernetesPodOperator and the Kubernetes executor:

- The KubernetesPodOperator requires a Docker image to be specified, while the Kubernetes executor doesn't.
- The KubernetesPodOperator defines one isolated Airflow task. In contrast, the Kubernetes executor is implemented at the configuration level of the Airflow instance, which means all tasks run in their own Kubernetes Pod. This might be desired in some use cases that require auto-scaling, but it's not ideal for environments with a high volume of shorter running tasks.
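Two of the use cases listed in this section, capping the resources of a single task and restricting it to Nodes in a particular region, come down to fields in the Pod specification. The following is a minimal sketch that builds those fields as plain Python dictionaries laid out like a Kubernetes Pod spec. The helper name `build_pod_constraints`, the default resource figures, and the example region value are illustrative assumptions and do not come from this guide. `topology.kubernetes.io/region` is the well-known Kubernetes region label, but your cluster may label its Nodes differently.

```python
from typing import Any, Dict, List


def build_pod_constraints(
    regions: List[str],
    cpu: str = "500m",
    memory: str = "512Mi",
) -> Dict[str, Any]:
    """Build resource and node-affinity fragments for a single Pod.

    Hypothetical helper for illustration; names and defaults are assumptions.
    """
    if not regions:
        raise ValueError("at least one region is required")

    # Requests and limits are set to the same values so the task
    # gets a fixed, predictable amount of CPU and memory.
    resources = {
        "requests": {"cpu": cpu, "memory": memory},
        "limits": {"cpu": cpu, "memory": memory},
    }

    # A hard scheduling rule: the Pod may only be placed on Nodes whose
    # region label matches one of the allowed regions.
    affinity = {
        "nodeAffinity": {
            "requiredDuringSchedulingIgnoredDuringExecution": {
                "nodeSelectorTerms": [
                    {
                        "matchExpressions": [
                            {
                                "key": "topology.kubernetes.io/region",
                                "operator": "In",
                                "values": list(regions),
                            }
                        ]
                    }
                ]
            }
        }
    }
    return {"resources": resources, "affinity": affinity}


# Example: restrict a task to a (hypothetical) European region.
constraints = build_pod_constraints(["europe-west1"], cpu="1", memory="1Gi")
```

In a DAG, structures like these would be handed to the KubernetesPodOperator through its resource and affinity arguments. The exact argument names and accepted types vary between provider versions, so check the provider documentation for the version you have installed.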
    in_cluster=in_cluster,  # if set to True, looks in the cluster; if False, looks for the config file
    cluster_context="docker-desktop",  # ignored when in_cluster is set to True
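The two arguments in this fragment interact: `cluster_context` only matters when the operator reads a kubeconfig file, that is, when `in_cluster` is false. A minimal sketch of that rule follows. It assembles the connection-related keyword arguments as a plain dictionary so the logic can be checked without an Airflow installation. The helper name `kpo_connection_kwargs` is hypothetical; `in_cluster`, `cluster_context`, and `config_file` are the argument names that appear in this guide's snippets.

```python
from typing import Any, Dict, Optional


def kpo_connection_kwargs(
    in_cluster: bool,
    config_file: Optional[str] = None,
    cluster_context: Optional[str] = None,
) -> Dict[str, Any]:
    """Return only the connection arguments that are relevant.

    Hypothetical helper for illustration; not part of Airflow.
    """
    kwargs: Dict[str, Any] = {"in_cluster": in_cluster}
    if in_cluster:
        # In-cluster configuration is used, so a kubeconfig file
        # and a context name would be ignored anyway.
        return kwargs
    if config_file is not None:
        kwargs["config_file"] = config_file
    if cluster_context is not None:
        kwargs["cluster_context"] = cluster_context
    return kwargs


# Local development against Docker Desktop's built-in cluster.
local_kwargs = kpo_connection_kwargs(
    in_cluster=False,
    config_file="/usr/local/airflow/include/.kube/config",
    cluster_context="docker-desktop",
)
```

The resulting dictionary could then be unpacked into the operator call alongside the task-specific arguments. Dropping the unused keys is a readability choice in this sketch, not a requirement of the operator.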

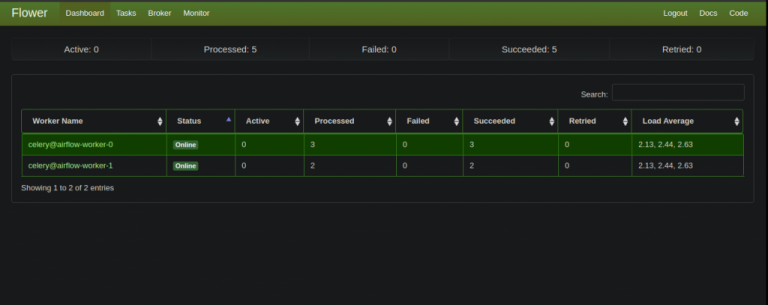

To use the KubernetesPodOperator, you need to install the Kubernetes provider package. Review the Airflow Kubernetes provider documentation to make sure you install the correct version of the provider package for your version of Airflow.

You also need an existing Kubernetes cluster to connect to. This is commonly the same cluster that Airflow is running on, but it doesn't have to be.

You don't need to use the Kubernetes executor to use the KubernetesPodOperator; it also works with other executors, such as the Celery executor. On Astro, the infrastructure needed to run the KubernetesPodOperator with the Celery executor is included with all clusters by default. For more information, see Run the KubernetesPodOperator on Astro.

Setting up your local environment to use the KubernetesPodOperator can help you avoid time-consuming deployments to remote environments. Use the steps below to quickly set up a local environment for the KubernetesPodOperator using the Astro CLI. Alternatively, you can use the Helm Chart for Apache Airflow to run open source Airflow within a local Kubernetes cluster. See Getting Started With the Official Airflow Helm Chart.

        "retry_delay": duration(minutes=5),

    # This will detect the default namespace locally and read the
    # environment namespace when deployed to Astronomer.
    config_file = "/usr/local/airflow/include/.kube/config"

    dag_id="example_kubernetes_pod", schedule=, default_args=default_args
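The comment in the snippet describes switching behavior based on the namespace: locally the namespace is `default` and the operator should read a kubeconfig file, while in a deployed environment the platform sets the namespace and the operator should use in-cluster configuration. One way that decision could look is sketched below. The function name `resolve_cluster_access` is hypothetical, and treating `"default"` as the marker for a local environment is an assumption drawn from that comment.

```python
from typing import Optional, Tuple

# Path taken from the guide's snippet; adjust it to your project layout.
LOCAL_KUBE_CONFIG = "/usr/local/airflow/include/.kube/config"


def resolve_cluster_access(namespace: str) -> Tuple[bool, Optional[str]]:
    """Return (in_cluster, config_file) for the given namespace.

    Hypothetical helper for illustration; not part of Airflow.
    """
    if namespace == "default":
        # Local run: authenticate through the kubeconfig file.
        return False, LOCAL_KUBE_CONFIG
    # Deployed run: use the in-cluster configuration, no file needed.
    return True, None


in_cluster, config_file = resolve_cluster_access("default")
```

The namespace itself would typically be read from the Airflow configuration or from an environment variable before this function is called.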
The KubernetesPodOperator (KPO) runs a Docker image in a dedicated Kubernetes Pod. By abstracting calls to the Kubernetes API, the KubernetesPodOperator lets you start and run Pods from Airflow using DAG code.

In this guide, you'll learn:

- The requirements for running the KubernetesPodOperator.
- How to configure the KubernetesPodOperator.
- The differences between the KubernetesPodOperator and the Kubernetes executor.

You'll also learn how to use the KubernetesPodOperator to run a task in a language other than Python, how to use the KubernetesPodOperator with XComs, and how to launch a Pod in a remote AWS EKS Cluster.

For more information about running the KubernetesPodOperator on a hosted cloud, see Run the KubernetesPodOperator on Astro.

Assumed knowledge

To get the most out of this guide, you should have an understanding of Airflow operators and Kubernetes basics.
